During the dog days of summer, when the markets are typically choppy, it can be difficult to find appealing directional trade candidates. When markets are choppy, you can look to market-neutral strategies to generate income and stay active in your trading.

Pairs trading is a market-neutral strategy in which you aim to generate income from the value of one asset relative to another. It is a relative value strategy: rather than depending on the outright direction of the broader markets, it produces returns from changes in the ratio between two different assets.

Asset pair trades, other than currency pairs, are executed by simultaneously opening a long position in one asset and a short position in another, in an effort to benefit from a change in the ratio of one asset to the other. Your pair trading strategy can include commodities, indices, stocks and, of course, currencies.

Finding Asset Pairs to Trade

There are dozens of asset pairs to trade, but to succeed you want to base your strategy on pairs that move in tandem. Currencies, commodities, indices and stocks whose returns are correlated usually move in tandem for a reason. Stocks in the same sector, such as Coke and Pepsi, are likely to be highly correlated. Gold and silver, oil and gasoline, and many currency pairs are also correlated. For example, the UK and the eurozone are major trading partners, so it makes sense that the GBP and the EUR would be highly correlated.

When two assets are positively correlated, their returns tend to move in the same direction. You can calculate this yourself using a spreadsheet such as Excel. The goal is to compare the changes in the price of each asset over a specific period of time.

Many traders make the mistake of evaluating the price of each asset instead of the change in price. If you want to determine whether two assets are correlated, make sure you compare their returns rather than their prices. In Excel, the function is =CORREL(array1, array2).
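The following minimal Python sketch makes the prices-versus-returns point concrete. `pearson` computes the same Pearson coefficient that Excel's CORREL returns. The two price series are made-up data, built so that both trend upward while their period-to-period moves are opposite. The helper names are illustrative and do not come from any library.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient, the statistic Excel's CORREL returns."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def simple_returns(prices):
    """Period-over-period percentage changes: p[t] / p[t-1] - 1."""
    return [curr / prev - 1 for prev, curr in zip(prices, prices[1:])]


def build_prices(start, returns):
    """Compound a list of returns into a price path (hypothetical helper)."""
    prices = [start]
    for r in returns:
        prices.append(prices[-1] * (1 + r))
    return prices


# Made-up data: both assets trend upward, but their day-to-day moves are opposite.
prices_a = build_prices(100.0, [0.02 if i % 2 == 0 else -0.01 for i in range(40)])
prices_b = build_prices(100.0, [-0.01 if i % 2 == 0 else 0.02 for i in range(40)])

price_corr = pearson(prices_a, prices_b)
return_corr = pearson(simple_returns(prices_a), simple_returns(prices_b))
print(f"correlation of prices:  {price_corr:.3f}")   # strongly positive (shared uptrend)
print(f"correlation of returns: {return_corr:.3f}")  # close to -1
```

On raw prices the two assets look strongly positively related, but only because both drift higher. On returns they turn out to move in opposite directions from one period to the next.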

A correlation of 1 means that the returns of two assets move perfectly in tandem. A correlation of -1 means that their returns move in exactly opposite directions, so the assets are perfectly inversely correlated. A correlation near 0 means there is little linear relationship between them.

Correlation describes how the returns of two assets move together over a given period. Co-integration describes whether their prices share a long-run link, so that the spread or ratio between them tends to revert instead of drifting apart indefinitely. A common illustration of co-integration is an old man walking his dog on a leash. The two can wander independently, but because the leash connects them, their random paths can never stray too far apart.

Here is an example using securities. Gold and oil prices might move in tandem for a period of time, but there is no link between the two commodities, so over time their correlation will break down. Gasoline and oil, on the other hand, are co-integrated, because gasoline is derived from oil. Over time the two assets will move in tandem, and even if the link occasionally breaks down, it will eventually reassert itself.

Unlike correlation, co-integration is not expressed on a scale from -1 to 1. It is usually assessed with a statistical test, such as the Engle-Granger test, which produces a test statistic and a p-value. The lower the p-value, the stronger the evidence that the pair is co-integrated. You can study the co-integration of a cross currency pair by breaking it down into its two legs against the US dollar. For example, you could run a co-integration study of the GBP/USD against the USD/JPY to determine whether the two legs of the GBP/JPY cross are co-integrated.
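Below is a minimal plain-Python sketch of the two-step Engle-Granger idea. It rests on simplifying assumptions: it uses an ordinary (non-augmented) Dickey-Fuller regression with no lag terms, the data are synthetic, and the function names are hypothetical. It returns a test statistic rather than a p-value. Compare that statistic with Engle-Granger critical values, not with ordinary Dickey-Fuller tables; MacKinnon's tables put the 5% level for two series at roughly -3.3 to -3.4. For real work, a library routine such as `statsmodels.tsa.stattools.coint`, which reports a p-value directly, is the safer choice.

```python
import random
from math import sqrt


def ols(y, x):
    """Least-squares fit of y = alpha + beta * x; returns (alpha, beta)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    beta = sxy / sxx
    return my - beta * mx, beta


def engle_granger_stat(y, x):
    """Two-step Engle-Granger sketch using a plain (non-augmented) Dickey-Fuller test.

    Step 1: regress price y on price x to estimate the hedge ratio beta.
    Step 2: regress the change in the residual spread on its lagged level;
            the t-statistic on that slope is the test statistic.
    Returns (beta, t_stat). A more negative t_stat means stronger evidence of co-integration.
    """
    alpha, beta = ols(y, x)
    spread = [b - alpha - beta * a for a, b in zip(x, y)]
    lagged = spread[:-1]
    change = [spread[t] - spread[t - 1] for t in range(1, len(spread))]
    s_ll = sum(e * e for e in lagged)
    gamma = sum(e * d for e, d in zip(lagged, change)) / s_ll
    sse = sum((d - gamma * e) ** 2 for e, d in zip(lagged, change))
    sigma2 = sse / (len(change) - 1)
    t_stat = gamma / sqrt(sigma2 / s_ll)
    return beta, t_stat


# Synthetic example: x is a random walk; y is tied to it by a stationary error.
rng = random.Random(7)
x = [100.0]
for _ in range(499):
    x.append(x[-1] + rng.gauss(0, 1))
y = [2.0 * v + rng.gauss(0, 1) for v in x]

beta, t_stat = engle_granger_stat(y, x)
print(f"hedge ratio ~ {beta:.3f}, DF t-stat on spread = {t_stat:.2f}")
```

To check the legs of a cross such as GBP/JPY, you could pass the GBP/USD and USD/JPY price series (or their logarithms) as `y` and `x`.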

An example of two stocks that are co-integrated is Visa and MasterCard. Both companies operate similar businesses and generally have highly correlated returns. The ratio of their stock prices has historically traded in a range. When the ratio moves a specified number of standard deviations away from its mean, you can take advantage of the divergence.
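The standard-deviation idea can be sketched as a z-score of the latest price ratio against the ratio's own history. This is illustrative only. The prices are made up, the 2-standard-deviation threshold is an assumption rather than a recommendation, and `pair_signal` is a hypothetical rule.

```python
from statistics import mean, stdev


def ratio_zscore(prices_a, prices_b):
    """How many standard deviations the latest A/B ratio sits from its historical mean."""
    ratios = [a / b for a, b in zip(prices_a, prices_b)]
    history, latest = ratios[:-1], ratios[-1]
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        raise ValueError("ratio history has no variation")
    return (latest - mu) / sigma


def pair_signal(z, threshold=2.0):
    """Hypothetical rule: fade ratio moves larger than `threshold` standard deviations."""
    if z > threshold:
        return "ratio rich: sell A short, buy B"
    if z < -threshold:
        return "ratio cheap: buy A, sell B short"
    return "no trade"


# Made-up closing prices for two related stocks (A and B), not real market data.
stock_a = [100.0, 102.0, 99.0, 101.0, 100.0, 103.0, 98.0, 112.0]
stock_b = [50.0] * 8

z = ratio_zscore(stock_a, stock_b)
print(round(z, 2), pair_signal(z))
```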

Graphing Asset Pairs

One of the best ways to evaluate asset pairs is to look at them on a chart. Currency pairs are the easiest pairs to analyze because the exchange rate is always expressed as a ratio. For example, the EUR/USD rate is the number of US dollars needed to buy one euro.

Nearly all charting platforms provide currency crosses, which are pairs that exclude the US dollar, such as the EUR/GBP or the GBP/JPY. If your software does not chart cross pairs, you can chart them yourself by calculating the exchange rate. For example, the GBP/JPY cross rate equals GBP/USD multiplied by USD/JPY, because dollars per pound times yen per dollar gives yen per pound.
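A quote such as GBP/USD is dollars per pound, and USD/JPY is yen per dollar. Multiplying them cancels the dollars and leaves yen per pound, which is the GBP/JPY rate. When both currencies are quoted against the dollar (EUR/USD and GBP/USD), you divide instead. The sketch below uses illustrative quotes, not live rates, and the function names are hypothetical.

```python
def cross_via_usd(base_usd, usd_quote):
    """BASE/QUOTE from BASE/USD and USD/QUOTE, e.g. GBP/JPY = GBP/USD * USD/JPY."""
    return base_usd * usd_quote


def cross_via_two_usd_bases(base_usd, quote_usd):
    """BASE/QUOTE when both currencies are quoted against USD, e.g. EUR/GBP = EUR/USD / GBP/USD."""
    return base_usd / quote_usd


# Illustrative quotes only, not live market rates.
gbp_usd, usd_jpy, eur_usd = 1.25, 150.0, 1.10
print(cross_via_usd(gbp_usd, usd_jpy))                      # GBP/JPY: 187.5
print(round(cross_via_two_usd_bases(eur_usd, gbp_usd), 4))  # EUR/GBP: 0.88

# To chart a cross, apply the same formula to each bar of two aligned series.
gbp_usd_closes = [1.25, 1.26, 1.24]
usd_jpy_closes = [150.0, 149.0, 151.0]
gbp_jpy_closes = [cross_via_usd(a, b) for a, b in zip(gbp_usd_closes, usd_jpy_closes)]
```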

Asset pairs other than currencies generally require more flexible software. You will either need to calculate the ratio on your own or have charting software that can plot it for you. When you calculate your pair, always use a ratio rather than a difference, so x/y rather than x-y.
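Here is a quick illustration, using made-up prices, of why a ratio is preferable to a difference. If both assets double, their relative value is unchanged, yet the difference doubles simply because the price level rose.

```python
def ratio(x, y):
    """Relative value of x in units of y; unchanged if both prices scale together."""
    return x / y


def difference(x, y):
    """Price spread; grows with the price level even when relative value is flat."""
    return x - y


# Made-up prices: both assets double, so their relative value has not changed.
before = (40.0, 20.0)
after = (80.0, 40.0)
print(ratio(*before), ratio(*after))            # 2.0 2.0
print(difference(*before), difference(*after))  # 20.0 40.0
```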

Pair Trading Strategies

There are two basic types of pair trading strategies: mean reversion and trend following. When you use a correlation-based pair strategy, you believe one of two things. Either a pair that has been moving in tandem will see its correlation break down, or a pair whose correlation has already broken down will revert to its long-term mean. A co-integrated pair will usually move in tandem, and if its correlation breaks down, you should expect it to revert to its long-term mean. If an asset pair is not co-integrated, you cannot be confident that it will mean revert, which makes it a possible candidate for a trend following strategy.

When evaluating assets for a mean reversion pair strategy, a pair trade becomes attractive when one asset is considered relatively cheap or dear compared with the other asset.

A pair strategy is designed to be market neutral, so the strategy as a whole should have little correlation with broad market indices such as the S&P 500. Because the risk you are assuming is relative value risk, your exposure is largely independent of market direction. This type of strategy allows investors to diversify their portfolios by allocating capital to something other than directional bets on stocks or bonds.

Mean reversion is one of the most common bases for a pair strategy. Here you seek to benefit when highly correlated assets experience a short-term divergence in returns. You can also trade pairs based on momentum or trend, just as you would trade an individual asset such as gold. Technical, statistical or fundamental analysis can all be used to generate a trading strategy.

You can base your strategy on a back test of the relationship between two related assets. The test shows whether a move of a specific number of standard deviations away from the historical mean of their ratio marks an attractive level at which to buy one asset and simultaneously sell the other short.
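A back test of this kind can be sketched in a few lines. The version below is a toy under stated assumptions. The entry threshold (2 standard deviations), the 20-bar lookback and the exit at the rolling mean are hypothetical choices, and the data are made up. P&L is measured in ratio points and ignores position sizing, transaction costs and slippage. The rolling window uses only prior values, so no decision peeks at future data.

```python
from statistics import mean, stdev


def backtest_ratio(ratios, lookback=20, entry_z=2.0):
    """Toy mean-reversion back test on a price ratio (hypothetical helper).

    Short the ratio (sell A, buy B) when its z-score versus the prior `lookback`
    values exceeds entry_z, go long (buy A, sell B) below -entry_z, and exit
    when the ratio crosses back through the rolling mean.
    Returns the realized P&L of each round trip, in ratio points.
    """
    position = 0          # +1 long the ratio, -1 short the ratio, 0 flat
    entry_price = 0.0
    trades = []
    for t in range(lookback, len(ratios)):
        window = ratios[t - lookback:t]   # past values only: no look-ahead
        mu, sigma = mean(window), stdev(window)
        if sigma == 0:
            continue
        r = ratios[t]
        z = (r - mu) / sigma
        if position == 0:
            if z > entry_z:
                position, entry_price = -1, r
            elif z < -entry_z:
                position, entry_price = 1, r
        elif (position == 1 and r >= mu) or (position == -1 and r <= mu):
            trades.append(position * (r - entry_price))
            position = 0
    return trades


# Made-up ratio series: small oscillation around 2.0, one spike to 2.2, then back.
calm = [2.01 if i % 2 == 0 else 1.99 for i in range(30)]
ratios = calm + [2.2] + calm[:10]
print(backtest_ratio(ratios))   # a single short-the-ratio round trip
```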

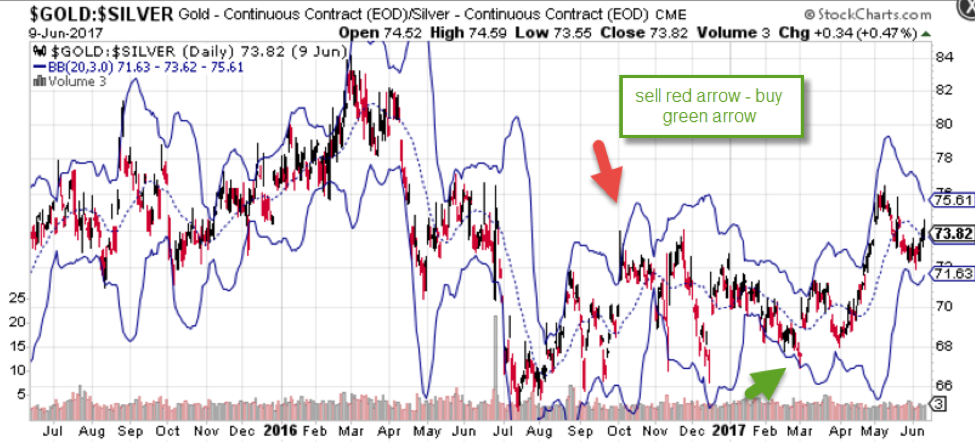

The above chart plots the price of gold divided by the price of silver. It shows that over time, the gold/silver ratio tends to revert to a long-term mean. With a 3-standard-deviation Bollinger band, you can find the levels where the gold/silver ratio reaches either the upper or the lower band and then expect it to revert toward the mean. The green arrow is an example of where you could buy gold and sell silver short. The red arrow is an example of a level where you could sell gold short and buy silver.
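Here is a rough sketch of the band logic described above. It assumes a 20-bar simple moving average with bands at 3 population standard deviations. The 20-bar window is a common default, not a setting taken from the chart. The function name and the sample ratios are hypothetical.

```python
from statistics import mean, pstdev


def bollinger_signals(ratios, window=20, num_std=3.0):
    """Flag bars where a price ratio closes on or outside its Bollinger Bands.

    Bands = simple moving average of the last `window` closes, plus or minus
    num_std population standard deviations of those same closes.
    """
    signals = []
    for t in range(window - 1, len(ratios)):
        recent = ratios[t - window + 1:t + 1]
        middle = mean(recent)
        width = num_std * pstdev(recent)
        if width == 0:
            continue  # flat window: bands collapse onto the average
        if ratios[t] <= middle - width:
            signals.append((t, "buy gold, sell silver short"))   # lower band (green arrow)
        elif ratios[t] >= middle + width:
            signals.append((t, "sell gold short, buy silver"))   # upper band (red arrow)
    return signals


# Made-up gold/silver ratios: a quiet stretch followed by a sharp jump.
sample = [80.0] * 19 + [90.0]
print(bollinger_signals(sample))
```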

The relationship between gold and silver is less a function of the price of gold or the price of silver and more a description of the value of gold in terms of silver. To back test this relationship, you need a system that specializes in back testing pairs. Most back testing software packages are designed to back test a single asset, including currency pairs. Many cannot back test the purchase of one asset and the simultaneous sale of another.

Another way to tackle this is to create your own instrument. For example, if you download the closing prices of gold and silver over a specific period, you can create your own asset called gold-silver by dividing the gold price by the silver price. If you only plan to back test the closing price of the pair, this methodology can work. If your software package allows you to upload your own instruments, you can then back test the new gold-silver instrument.
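Creating the synthetic instrument amounts to joining the two closing-price histories on their common dates and dividing one by the other. The sketch below assumes the closes are already loaded into dictionaries keyed by ISO date strings. It also assumes your back tester can import a simple two-column CSV. Both the data and the file layout are assumptions, since import formats vary by package.

```python
import csv
import io


def build_ratio_series(gold_closes, silver_closes):
    """Join two {date: close} maps on common dates and return (date, gold / silver) rows."""
    common_dates = sorted(set(gold_closes) & set(silver_closes))
    return [(d, gold_closes[d] / silver_closes[d]) for d in common_dates]


def to_csv(rows):
    """Serialize (date, close) rows as CSV text for a back tester that accepts custom instruments."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["date", "close"])
    writer.writerows(rows)
    return buf.getvalue()


# Made-up closing prices; dates that exist in only one series are dropped.
gold = {"2020-01-02": 1250.0, "2020-01-03": 1260.0, "2020-01-06": 1255.0}
silver = {"2020-01-02": 25.0, "2020-01-03": 24.0, "2020-01-07": 24.5}

gold_silver = build_ratio_series(gold, silver)
print(to_csv(gold_silver))
```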

Statistical Arbitrage Pairs Trading

Statistical arbitrage is a form of intraday pair trading that relies on quantitative methods.

This strategy takes advantage of co-integrated pairs whose relative value changes during the trading day. This could be a standard pair, such as gasoline versus crude oil, or even a currency futures contract paired with the corresponding cash currency pair. Another example might be the dollar index versus the EUR/USD.

Statistical arbitrage is a favorite strategy among hedge funds. They use high-powered technology to find pairs that are out of kilter and try to take advantage of these abnormalities. You can attempt to develop your own statistical arbitrage methodology, but in many cases the speed of your execution can be the difference between a profitable trade and an unsuccessful one.

A second style of pair trading is trend trading. Here you can use your favorite trend strategy to determine whether the ratio between the two assets is breaking out. If you want to catch the middle of a trend, you can use a moving average crossover strategy. It can be built on either simple or exponential moving averages, depending on how much weight you want to give the most recent values of the ratio.
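A moving average crossover on the ratio might look like the sketch below. The SMA and EMA helpers follow the usual definitions. The EMA uses alpha = 2 / (n + 1) and, as one common convention, is seeded with the first value. The 3- and 6-bar lengths and the made-up ratio series are assumptions chosen only to keep the example short.

```python
def sma(values, n):
    """Simple moving average; None until n values are available."""
    return [None if t < n - 1 else sum(values[t - n + 1:t + 1]) / n
            for t in range(len(values))]


def ema(values, n):
    """Exponential moving average with alpha = 2 / (n + 1), seeded with the first value."""
    alpha = 2 / (n + 1)
    out = [values[0]]
    for v in values[1:]:
        out.append(out[-1] + alpha * (v - out[-1]))
    return out


def crossovers(fast, slow):
    """(index, 'bullish' | 'bearish') wherever the fast average crosses the slow one."""
    events = []
    for t in range(1, len(fast)):
        if None in (fast[t - 1], slow[t - 1], fast[t], slow[t]):
            continue
        prev_gap = fast[t - 1] - slow[t - 1]
        gap = fast[t] - slow[t]
        if prev_gap <= 0 < gap:
            events.append((t, "bullish"))   # ratio trending up: buy A, sell B
        elif prev_gap >= 0 > gap:
            events.append((t, "bearish"))   # ratio trending down: sell A, buy B
    return events


# Made-up ratio that is flat and then starts trending higher.
ratio = [1.0] * 10 + [1.0 + 0.1 * i for i in range(1, 11)]
print(crossovers(sma(ratio, 3), sma(ratio, 6)))
print(crossovers(ema(ratio, 3), ema(ratio, 6)))
```

The SMA weights every value in its window equally. The EMA reacts faster to the latest ratio, which is the trade-off the paragraph above describes.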

If you want to measure the momentum of the ratio, you can use a momentum oscillator such as the moving average convergence divergence (MACD). This indicator evaluates the momentum of the ratio and generates crossover buy or sell signals. These can help you determine whether the ratio is breaking out as the correlation between the two securities breaks down.
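The MACD can be computed directly on the ratio series. This sketch uses the conventional 12, 26 and 9 settings. It treats a cross of the MACD line above its signal line as a buy signal and a cross below as a sell signal. The data are made up, and the EMA is seeded with the first value, which is one convention among several.

```python
def ema(values, n):
    """Exponential moving average with alpha = 2 / (n + 1), seeded with the first value."""
    alpha = 2 / (n + 1)
    out = [values[0]]
    for v in values[1:]:
        out.append(out[-1] + alpha * (v - out[-1]))
    return out


def macd(values, fast=12, slow=26, signal=9):
    """MACD line = EMA(fast) - EMA(slow); signal line = EMA(signal) of the MACD line."""
    macd_line = [f - s for f, s in zip(ema(values, fast), ema(values, slow))]
    return macd_line, ema(macd_line, signal)


def macd_crossovers(values, **params):
    """(index, 'buy' | 'sell') where the MACD line crosses its signal line."""
    macd_line, signal_line = macd(values, **params)
    events = []
    for t in range(1, len(values)):
        prev_gap = macd_line[t - 1] - signal_line[t - 1]
        gap = macd_line[t] - signal_line[t]
        if prev_gap <= 0 < gap:
            events.append((t, "buy"))    # momentum of the ratio turning up
        elif prev_gap >= 0 > gap:
            events.append((t, "sell"))   # momentum of the ratio turning down
    return events


# Made-up ratio that steps up from 1.0 to 1.2 and then stays there.
ratio = [1.0] * 30 + [1.2] * 30
print(macd_crossovers(ratio))
```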

Executing Pair Trades

There are several ways to execute a pair trade. If you are trading currency pairs, you can simply buy or sell the pair, or even use the options market. If you are trading a futures pair, you need to buy one asset, such as gold, and simultaneously sell short another asset, such as silver.

Executing a cross currency pair transaction is straightforward, but sizing a pair of two different assets can be tricky. The goal is to trade an identical notional amount on each leg. For example, you would calculate a pair trade of gold and silver as follows:

Start by determining the notional amount you want to trade. Let’s assume that you want to transact a $5,000 trade when gold is at $1,250 per ounce and silver is trading at $17 per ounce. Dividing $5,000 by $1,250 gives 4 ounces of gold. Dividing $5,000 by $17, the price of silver, gives approximately 294 ounces of silver. This assumes a cash trade, but the process is similar if you are executing a futures or ETF trade.

In many cases, you will not find a contract that is 4 ounces of gold, but this calculation shows you the ratio of gold to silver based on the notional quantity you might be interested in trading.
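The sizing arithmetic from the gold and silver example can be expressed as a small Python function. The function name is illustrative.

```python
def equal_notional_quantities(notional, price_a, price_b):
    """Units of each leg so that both legs carry the same dollar notional."""
    return notional / price_a, notional / price_b


gold_oz, silver_oz = equal_notional_quantities(5_000, 1_250, 17)
print(gold_oz)                        # 4.0
print(round(silver_oz, 1))            # 294.1
print(round(silver_oz / gold_oz, 2))  # 73.53 ounces of silver per ounce of gold
```

The last figure, roughly 73.5 ounces of silver per ounce of gold, is the ratio that carries over when you scale the trade up or down or map it onto actual contract sizes.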

An alternative way to transact a pair trade is with options. If your strategy involves trading options on a currency pair and you believe the pair will move higher, you can purchase a call option. A call is the right but not the obligation to buy a specific quantity on or before a certain date. If you instead want to sell a currency pair, you could purchase a put option, which is the right but not the obligation to sell a specific quantity on or before a certain date.

When you purchase an FX option, you pay the option seller a premium for the right to either buy or sell a currency pair. Your call option will expire worthless if the exchange rate is at or below the strike price at expiration. Similarly, your put option will expire worthless if the exchange rate is at or above the strike price at expiration.
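These expiration outcomes can be written as a per-unit profit and loss calculation for a bought option. The strike and premium below are made-up numbers. The premium is assumed to be paid in the quote currency per unit of the base currency. The sketch ignores anything that happens before expiration.

```python
def long_call_pnl(spot_at_expiry, strike, premium):
    """Per-unit P&L of a bought call at expiration: worthless at or below the strike."""
    return max(spot_at_expiry - strike, 0.0) - premium


def long_put_pnl(spot_at_expiry, strike, premium):
    """Per-unit P&L of a bought put at expiration: worthless at or above the strike."""
    return max(strike - spot_at_expiry, 0.0) - premium


# Illustrative EUR/USD option: strike 1.10, premium 0.01 USD per euro (made-up numbers).
for spot in (1.07, 1.10, 1.13):
    call = round(long_call_pnl(spot, 1.10, 0.01), 4)
    put = round(long_put_pnl(spot, 1.10, 0.01), 4)
    print(spot, call, put)
```

In each case the most the buyer can lose is the premium, which is the loss when the option expires worthless.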

If you want to use options on two different instruments, you need to fully understand the risks you are taking before entering each transaction. Some brokers offer options on pairs. Even so, buying and selling calls and puts on two different pairs creates multiple risks that are not always easy for an inexperienced trader to manage.

Pair Trading Software

There are several pair trading software programs that focus on ETFs and stocks. These packages help you find asset pairs that are highly correlated. They also provide a back testing module that shows how a strategy would have performed over a number of years.

Some have their own pair trading algorithm, while others let you build your own pair trading model. One benefit of pair trading software is that it can help you find, back test and monitor pairs.

Summary

Pairs trading is considered a market-neutral form of trading, designed to be largely uncorrelated with traditional investing in stocks and bonds. The pairs you use in your strategy can range from currency pairs to commodities, indices or even stocks.

Common strategies range from quantitative mean reversion strategies to trend following momentum breakout strategies. When you graph a pair, the most efficient way to analyze it is to divide one asset by the other. The exchange rate of a currency pair is always expressed as one currency divided by another. If you plan to trade commodity, index or stock pairs, you will need software that can chart a custom ratio.

You can execute pair trades in a variety of ways, including over-the-counter products, futures or options.